Overview

Something I built with a friend of mine as an example of a distributed system for a university course.

A threat intelligence sharing network built on the Raft consensus algorithm, with LLM-generated executive summaries of threat data.

A federated service (which can be switched to another instance) collects data from known threat feeds, manages LLM connections and connects nodes to the network. Nodes within the network implement their own threat feed integrations and share that data with each other, operating independently even when the federated services are down.

All the performance-critical components were written in Rust, and the rest in Python.

Features

- Single feed aggregator reading from known threat intelligence sources.

- Communication: Secure channels (TLS) and message queues for multi-organization data.

- Time & Consensus: Achieve consensus on new threat indicators to prevent tampering

- Non-Functional Requirements: High reliability, robust security and scalability

- Replication and consistency: Maintain identical threat databases at all participant nodes

- Resource management & Scheduling: Balance load for analyzing huge logs from each partner

- System Architecture: Microservices for data ingestion, verification, distribution etc.

- Middleware: Role-based access control, encryption, logging

- Big Data Processing: Analyze large-scale historical threat patterns

- LLM features: generate executive summaries of the most recent threats

- Edge-Cloud Continuum: Local threat detection at each organization’s edge; aggregated intelligence in the cloud

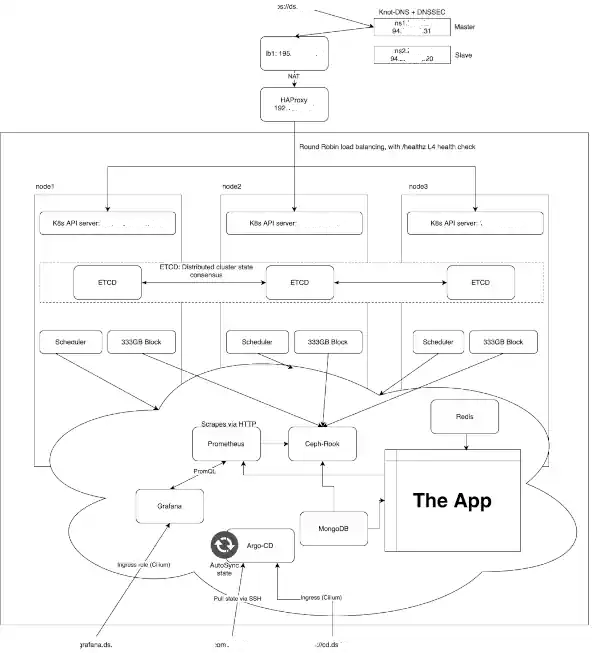

Cluster

The following Kubernetes cluster was used during development:

Components

| Component | Description |
|---|---|
| ArgoCD | Continuous delivery tool for managing Kubernetes deployments |
| Cilium | Networking and security plugin for Kubernetes |
| Grafana | Dashboard and visualization platform for monitoring |
| MongoDB | Document-based NoSQL database for storing data |
| Prometheus | Monitoring and alerting system for clusters |
| etcd | Consensus on threat data |
| Rook Ceph Cluster | Manages a Ceph storage cluster for distributed persistent storage |
| Rook Operator | Operator for orchestrating Ceph cluster operations |
| Threat Processor | Processes threat intelligence data for analysis |
| Threat Reporting | Generates reports on detected security threats |
| Node Core | Handles communication between nodes and consumes data |
| Node Edge | Generates data and sends it to Node Core |

Architecture

Sadly, the architecture diagrams have been lost to time, but in essence the system consists of Federated Services and Nodes.

Federated Services

Federated Services can be considered the “service provider” part of the system.

The idea behind federated services is that if the federated service instance in location X goes down, another instance in location Y can potentially take over without massively disrupting the overall system.

Federated Services also handle the heaviest, most resource-intensive operations of the system to avoid overloading the nodes.

These operations are pulling threat data from online feeds and handling the LLM connection.

LLM operations could potentially have been handled by the nodes themselves as well, but due to token limitations and the scope of this project, a decision was made to offload them to the federated services.

Threat Processor

Threat Processor collects threat data from online sources and passes it to nodes.

It consists of an Aggregator that pulls data from threat feeds and a Normalizer that standardizes the received data.

The processor is modular, so adding a new threat feed to the system is fairly simple: implement new Aggregator and Normalizer modules in the threat_processor part of the services.
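The plug-in idea can be sketched in Python. This is a minimal sketch, assuming a hypothetical `Indicator` record as the common output format, abstract `Aggregator`/`Normalizer` base classes and a `register_feed` registry; none of these names come from the actual threat_processor code, and the static feed stands in for a real download.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class Indicator:
    """Hypothetical common format that every Normalizer emits."""
    value: str   # e.g. an IP address, domain or file hash
    kind: str    # e.g. "ip", "domain", "sha256"
    source: str  # name of the feed the indicator came from


class Aggregator(ABC):
    """Pulls raw records from one threat feed."""

    @abstractmethod
    def fetch(self) -> list[dict]:
        ...


class Normalizer(ABC):
    """Converts one feed's raw records into Indicators."""

    @abstractmethod
    def normalize(self, records: list[dict]) -> list[Indicator]:
        ...


# Hypothetical registry: feed name -> (Aggregator, Normalizer).
# Adding a new feed only means registering one more pair.
FEEDS: dict[str, tuple[Aggregator, Normalizer]] = {}


def register_feed(name: str, aggregator: Aggregator, normalizer: Normalizer) -> None:
    FEEDS[name] = (aggregator, normalizer)


def run_all() -> list[Indicator]:
    """Pull every registered feed and return the combined indicators without duplicates."""
    seen: set[Indicator] = set()
    result: list[Indicator] = []
    for aggregator, normalizer in FEEDS.values():
        for indicator in normalizer.normalize(aggregator.fetch()):
            if indicator not in seen:
                seen.add(indicator)
                result.append(indicator)
    return result


# Example feed with static data standing in for a real HTTP download.
class StaticIpAggregator(Aggregator):
    def fetch(self) -> list[dict]:
        return [{"ip": "203.0.113.7"}, {"ip": "198.51.100.23"}, {"ip": "203.0.113.7"}]


class StaticIpNormalizer(Normalizer):
    def normalize(self, records: list[dict]) -> list[Indicator]:
        return [Indicator(value=r["ip"], kind="ip", source="static-ip-feed") for r in records]


register_feed("static-ip-feed", StaticIpAggregator(), StaticIpNormalizer())
print(run_all())
```

Keeping the Aggregator and Normalizer separate means feed-specific parsing never leaks into the download logic, and everything downstream only sees one indicator format.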

Threat Reporting

Threat Reporting sends received threat data to OpenAI to produce clear-text, executive-style reports and shares them with nodes through a Redis cache. Reports are also stored in MongoDB for later viewing.

It consists of:

- Daily Processor: the scheduler of the service

- LLM Batch Processor: batches reports and sends them to OpenAI

- Redis Processor: passes threat data and reports to and from nodes

- Storage Service: handles MongoDB CRUD operations
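A minimal sketch of the kind of batching the LLM Batch Processor does, assuming batches are capped by character count as a rough stand-in for the model's token limit. `make_batches`, `build_prompt` and the prompt wording are hypothetical, and the actual OpenAI call is left out.

```python
def make_batches(items: list[str], max_chars: int) -> list[list[str]]:
    """Greedily group items so each batch's total length stays within max_chars.

    Character count is only a rough proxy for tokens (an assumption). An item
    longer than max_chars on its own ends up alone in its own batch.
    """
    batches: list[list[str]] = []
    current: list[str] = []
    current_len = 0
    for item in items:
        # Close the current batch if adding this item would exceed the budget.
        if current and current_len + len(item) > max_chars:
            batches.append(current)
            current, current_len = [], 0
        current.append(item)
        current_len += len(item)
    if current:
        batches.append(current)
    return batches


def build_prompt(batch: list[str]) -> str:
    """Hypothetical prompt text sent to the LLM for one batch."""
    lines = "\n".join(f"- {item}" for item in batch)
    return "Write a short executive summary of the following threat data:\n" + lines


for batch in make_batches(["a" * 40, "b" * 40, "c" * 40], max_chars=100):
    print(len(batch), build_prompt(batch).splitlines()[0])
```

Batching keeps each request under the limit while sending as few requests as possible, which matters when the LLM is a paid external service.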

Node Services

Node Services are the “clients” of the system, meaning nodes are the part that is installed in the different organizations.

Each node consists of Core Services and Edge Services. Core Services handle the connection to the federated services and to other nodes, while Edge Services make it possible to integrate the node with external systems.

For example, an organization could have Suricata or a SIEM system running in its network and implement its own integration using the Edge Services to get that data into its node and share it with the distributed system.

Nodes are designed so that they can continue working independently as part of the distributed system if the Federated Service provider goes down.
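The fallback behaviour can be sketched as a node walking through a list of known federated instances and dropping to local-only mode when none of them answer. The endpoint URLs, `choose_federated_instance`, `sync_mode` and the `is_reachable` probe are hypothetical; a real probe would be something like a health-check request with a timeout.

```python
from typing import Callable, Optional


def choose_federated_instance(
    endpoints: list[str], is_reachable: Callable[[str], bool]
) -> Optional[str]:
    """Return the first reachable federated-service endpoint, or None if all are down."""
    for endpoint in endpoints:
        if is_reachable(endpoint):
            return endpoint
    return None


def sync_mode(endpoints: list[str], is_reachable: Callable[[str], bool]) -> str:
    endpoint = choose_federated_instance(endpoints, is_reachable)
    if endpoint is None:
        # No federated service available: keep sharing indicators with other
        # nodes and rely on the node's own local storage.
        return "local-only"
    return f"federated:{endpoint}"


# Hypothetical instances in two locations; instance X is "down" in this example.
ENDPOINTS = ["https://fed-x.example.org", "https://fed-y.example.org"]
print(sync_mode(ENDPOINTS, lambda e: "fed-y" in e))
print(sync_mode(ENDPOINTS, lambda e: False))
```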

Core Services

Core Services consist of a report consumer, which connects to the Threat Reporting system via Redis to receive the daily reports generated from threat data, and a Threat Service, which handles consensus (using etcd), communication between nodes, local MongoDB storage and the connection to Edge Services.
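One way to make a shared indicator store resistant to silent overwrites is to key each indicator by a hash of its content and only write keys that don't exist yet. The sketch below assumes that design (it is not taken from the project) and simulates the store in memory; in etcd itself the same check is usually done with a transaction that compares the key's create revision with 0 before the put. `indicator_key`, `InMemoryStore` and `publish` are hypothetical names.

```python
import hashlib
import json
from typing import Optional


def indicator_key(indicator: dict) -> str:
    """Derive a deterministic etcd-style key from the indicator's content."""
    # Sorted keys and fixed separators give the same bytes for equal content.
    canonical = json.dumps(indicator, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return f"/threats/{digest}"


class InMemoryStore:
    """Stand-in for the replicated key-value store (etcd in the project)."""

    def __init__(self) -> None:
        self._data: dict[str, str] = {}

    def put_if_absent(self, key: str, value: str) -> bool:
        """Write only if the key does not exist yet; return True if written."""
        if key in self._data:
            return False
        self._data[key] = value
        return True

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)


def publish(store: InMemoryStore, indicator: dict) -> bool:
    """Store an indicator under its content hash; existing entries are never replaced."""
    return store.put_if_absent(indicator_key(indicator), json.dumps(indicator, sort_keys=True))


store = InMemoryStore()
first = publish(store, {"value": "203.0.113.7", "kind": "ip"})
again = publish(store, {"kind": "ip", "value": "203.0.113.7"})  # same content, different key order
print(first, again)
```

Because the key is derived from the content, a modified indicator gets a different key instead of replacing the agreed-upon entry.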

The caveat of the Node’s Core Services is that etcd clusters are recommended to have no more than 7 members, which effectively limits how many nodes can join one cluster. For the scope of this project this wasn’t taken into account, but adding a pooling system to the nodes for future expandability would be a possibility.
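The 7-member guideline follows from Raft's majority rule: every write has to be acknowledged by a majority of members, so bigger clusters mean more replication traffic, while only some added members improve fault tolerance. The arithmetic is short enough to sketch:

```python
def quorum(members: int) -> int:
    """Votes needed for a Raft majority in a cluster of `members` nodes."""
    return members // 2 + 1


def tolerated_failures(members: int) -> int:
    """How many members can fail while a majority is still available."""
    return members - quorum(members)


for n in range(1, 8):
    print(f"members={n} quorum={quorum(n)} tolerated_failures={tolerated_failures(n)}")
```

For example, a 7-member cluster needs 4 votes and tolerates 3 failed members, while going from 3 to 4 members raises the quorum without tolerating any extra failure, which is why odd cluster sizes are preferred.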

Edge Services

Edge Services’ implementation resembles the Threat Processor of the Federated Services, as it consists of a collector and a normalizer. In addition, it has a connector that handles communication with Core Services.

New integrations can be implemented by adding new collector and normalizer modules. These integrations could be used to connect with internal security systems, such as an IDS or a SIEM.
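As an example of an integration, a Suricata collector could read Suricata's EVE JSON log (one JSON object per line) and forward alerts to Core Services. This is a minimal sketch: the target schema, the function names and the `send` callback standing in for the connector's link to Core Services are hypothetical, and the sample lines are made-up illustrative records using documentation IP ranges.

```python
import json
from typing import Callable, Iterable, Iterator


def collect(lines: Iterable[str]) -> Iterator[dict]:
    """Collector: parse EVE JSON lines (one JSON object per line), skipping blanks."""
    for line in lines:
        line = line.strip()
        if line:
            yield json.loads(line)


def normalize(events: Iterable[dict]) -> Iterator[dict]:
    """Normalizer: keep only alert events and map them to a hypothetical common schema."""
    for event in events:
        if event.get("event_type") != "alert":
            continue
        alert = event.get("alert", {})
        yield {
            "value": event.get("src_ip"),
            "kind": "ip",
            "description": alert.get("signature"),
            "severity": alert.get("severity"),
            "observed_at": event.get("timestamp"),
            "source": "suricata",
        }


def connect(indicators: Iterable[dict], send: Callable[[dict], None]) -> int:
    """Connector: hand each indicator to Core Services via `send`; return the count."""
    count = 0
    for indicator in indicators:
        send(indicator)
        count += 1
    return count


# Illustrative EVE lines: one alert and one non-alert (DNS) event.
sample = [
    '{"timestamp": "2024-01-01T12:00:00.000000+0000", "event_type": "alert", '
    '"src_ip": "203.0.113.7", "dest_ip": "192.0.2.10", '
    '"alert": {"signature": "Example signature", "severity": 2}}',
    '{"timestamp": "2024-01-01T12:00:01.000000+0000", "event_type": "dns", "src_ip": "192.0.2.10"}',
]
sent: list[dict] = []
print(connect(normalize(collect(sample)), sent.append))
```

Chaining generators keeps each stage small and lets a large log be streamed without loading it into memory.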